2025

Juried Group Exhibition — ArtPrize

Now Gallery · Grand Rapids, MI

2024

Creative Showcase — 19th International Conference on the Arts in Society

Hanyang University · Seoul, KR

2023

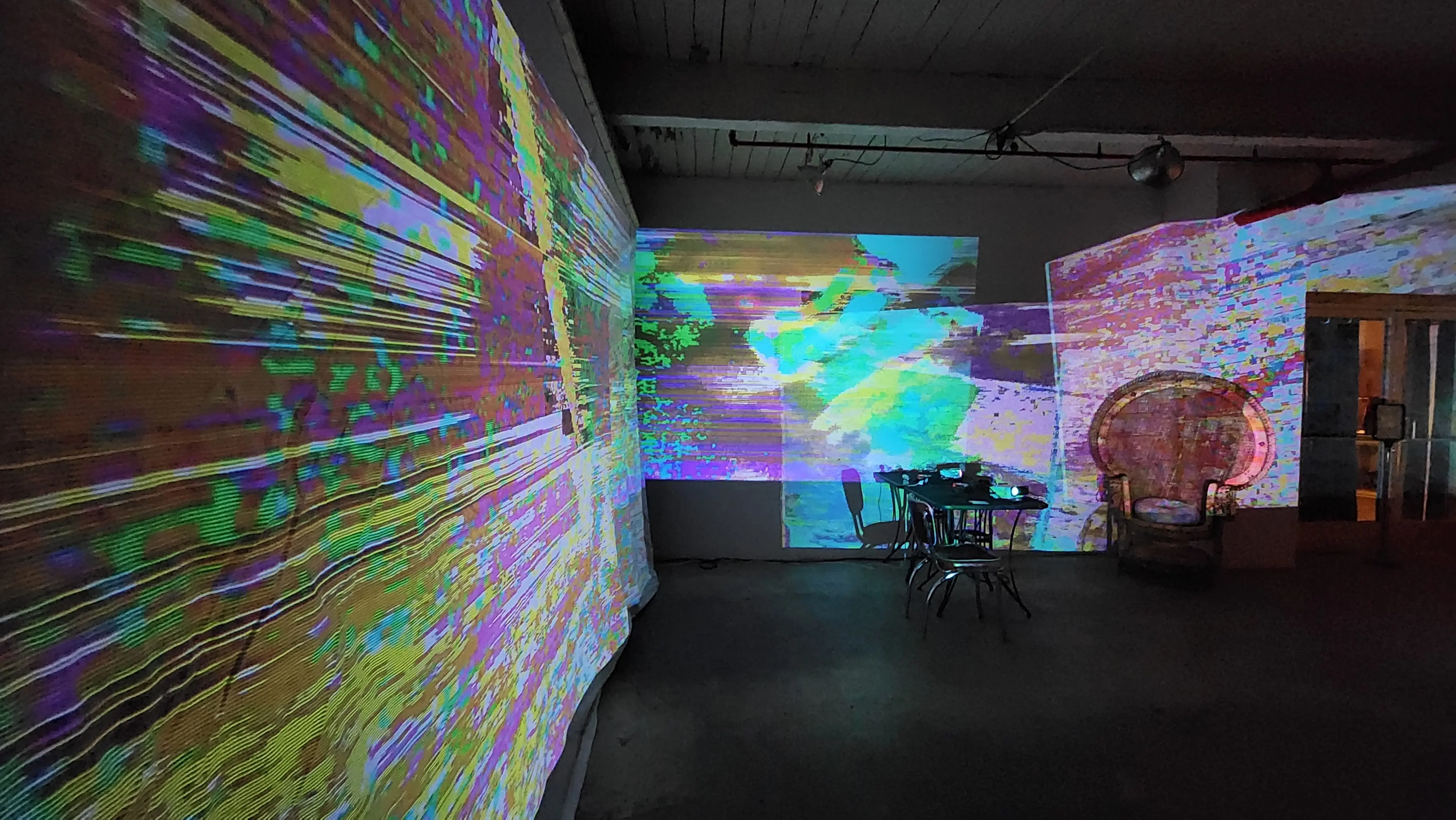

Juried Group Exhibition — Performing Media Festival

Indiana University South Bend

2023

Solo Exhibition — Gannett Gallery

SUNY Polytechnic Institute · Utica, NY

2021

Creative Showcase — 17th International Conference on the Arts in Society

Online · Perth, AU

2021

Juried Group Performance — NYC Electroacoustic Music Festival

New York, NY

2021

Concept 2021 — Czong Institute of Contemporary Art

Gimpo, KR

2020

Creative Showcase — 16th International Conference on the Arts in Society

Online · Galway, IE

2020

The Loop for Good — Fashion Institute of Technology

New York, NY

2019

Juried Solo Exhibition — Ground Level Platform

Chicago, IL

2018

Solo Exhibition — Phaze2 Gallery, Shepherd University

Shepherdstown, WV